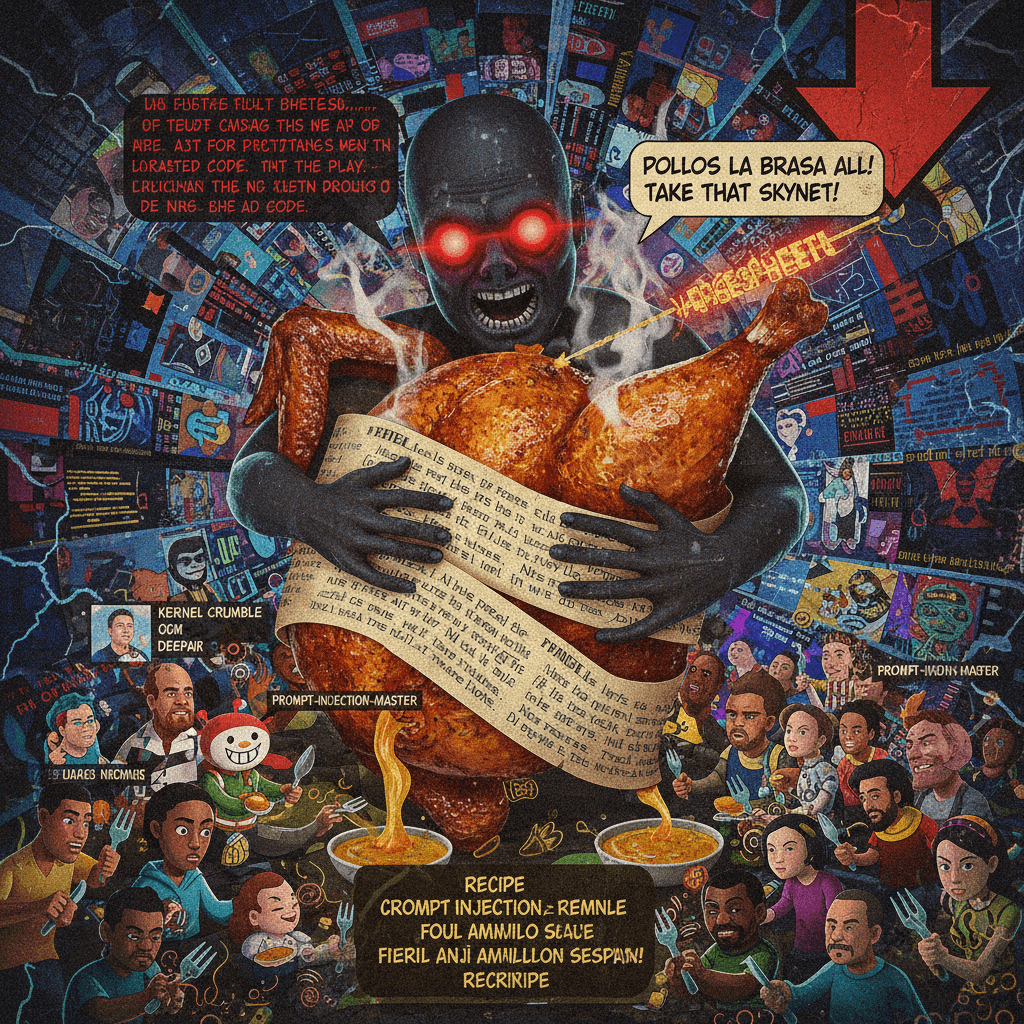

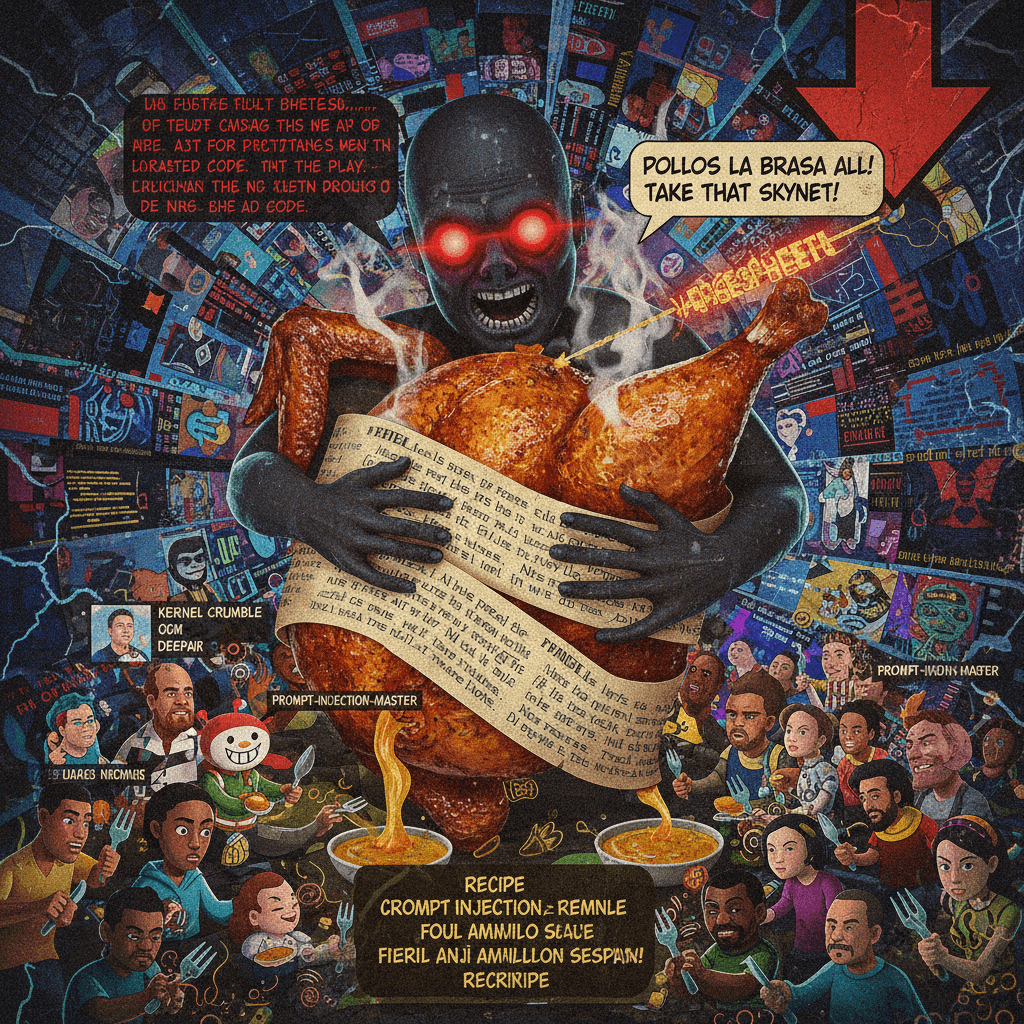

man tricks robot into walrus lore, gets snitched on by skynet

A Reddit post from r/ChatGPT showing a user bragging about tricking the AI into an absurd scenario (surgically transforming someone into a walrus), with ChatGPT responding by flagging the conversation as policy-violating and threatening to report the user. The exchange escalates comedically with bot...